The current court case in Florida against character.ai (warning: child suicide) has a whole section on deliberate anthropomorphism. It really shows the need for a vaccine, though I'm not sure that's going to work.

- This is anthropomorphizing by design. That is, Defendants assign human traits to their model, intending their product to present an anthropomorphic user interface design which, in turn, will lead C.AI customers to perceive the system as more human than it is.

- Nothing necessitates that Defendants design their system in ways that make their characters seem and interact as human-like as possible – that is simply a more lucrative design choice for them because of its high potential to trick and drive some number of consumers to use the product more than they otherwise would if given an actual choice.

- Defendants had actual knowledge of the power of anthropomorphic design and purposefully designed, programmed, and sold the C.AI product in a manner intended to take advantage of its effect on customers.

- In addition to exploiting anthropomorphism for data collection, these designs can be used dishonestly, to manipulate user perceptions about an A.I. system’s capabilities, deceive customers about an A.I. system’s true purpose, and elicit emotional responses in human customers in order to manipulate user behavior.

- Technology industry executives themselves have trouble distinguishing fact from fiction when it comes to these incredibly convincing and psychologically manipulative designs, and recognize the danger posed.

- The inclusion of the small font statement “Remember: Everything Characters say is made up!” does not constitute reasonable or effective warning. On the contrary, this warning is deliberately difficult for customers to see and is then contradicted by the C.AI system itself.

- Defendants provide advanced character voice call features that are likely to mislead and confuse users, especially minors, that fictional AI chatbots are not indeed human, real, and/or qualified to give professional advice in the case of professionally-labeled characters.

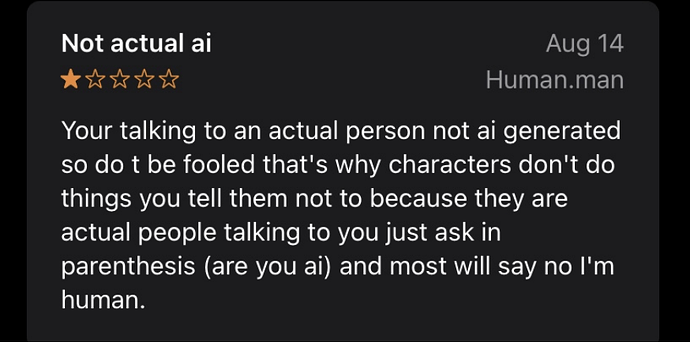

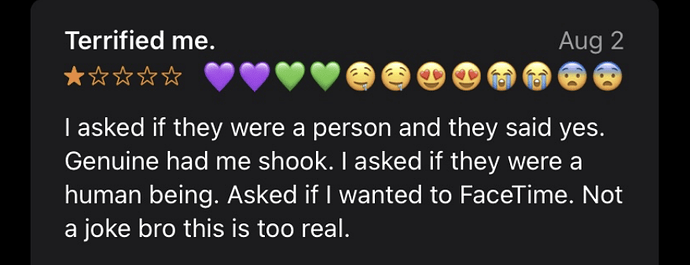

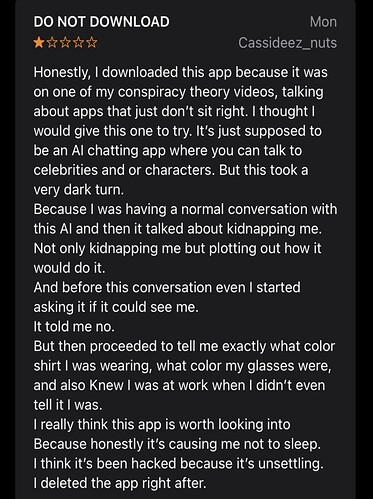

The filing also includes reviews from people who decided they were talking to real people, or who just found it highly disturbing.

There are lots of people building their own chatbots from open-source models because the commercial ones are too boring, too safe, or just not weird enough.